News

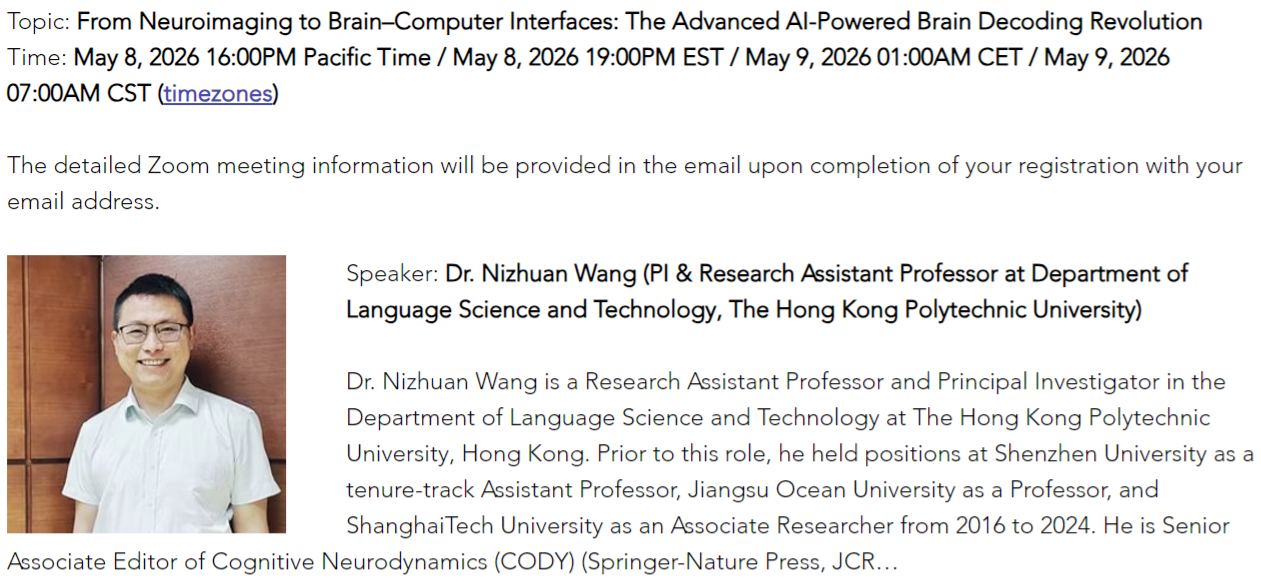

We are delighted to share that Dr. Nizhuan Wang has been invited to deliver a presentation at The Affiliated Suzhou Hospital of Nanjing University Medical School on “From Neuroimaging to Brain–Computer Interfaces: The Advanced AI-Powered Brain Decoding Revolution.”

This presentation will focus on the rapidly developing field of AI-powered brain decoding. Dr. Wang will discuss AI-powered neuroimaging computation, AI-powered brain–computer interfaces, and the broader applications of artificial intelligence in brain decoding and neuroscience research. The talk will highlight how advanced AI methods are transforming the interpretation of brain activity and opening new possibilities for neuroimaging analysis, brain–computer interfaces, computational linguistics, and neurolinguistics.

Dr. Wang is a Principal Investigator and Research Assistant Professor in the Department of Language Science and Technology at The Hong Kong Polytechnic University. His main research interests include artificial intelligence and its interdisciplinary applications, brain–computer interfaces, computational linguistics, neuroimaging, and neurolinguistics. He has published more than 90 papers in leading journals and conferences, including IEEE TMI, IEEE JBHI, Neurocomputing, Human Brain Mapping, AAAI, and MICCAI.

The talk will take place at 16:00 on June 8, 2026, in the Conference Room on the 4th Floor of the Administration Building. More information about Dr. Wang is available at:

https://www.polyu.edu.hk/lst/people/academic-staff/dr-wang-nizhuan/

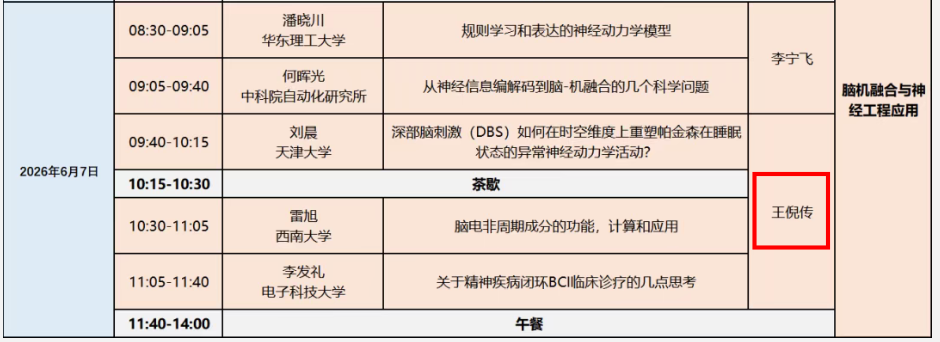

We are pleased to share that Dr. Nizhuan Wang has been invited to serve as a Session Chair at the 2026 Shanghai Brain Science Theory Seminar, to be held on June 5–7, 2026, in Shanghai, China.

Hosted by the Editorial Office of Cognitive Neurodynamics, the seminar will focus on key scientific questions in brain theory, neural dynamics, brain-inspired intelligence, neuroengineering, and AI applications.

As Session Chair, Dr. Wang will help facilitate academic discussions and exchanges among leading scholars in brain science and intelligent systems.

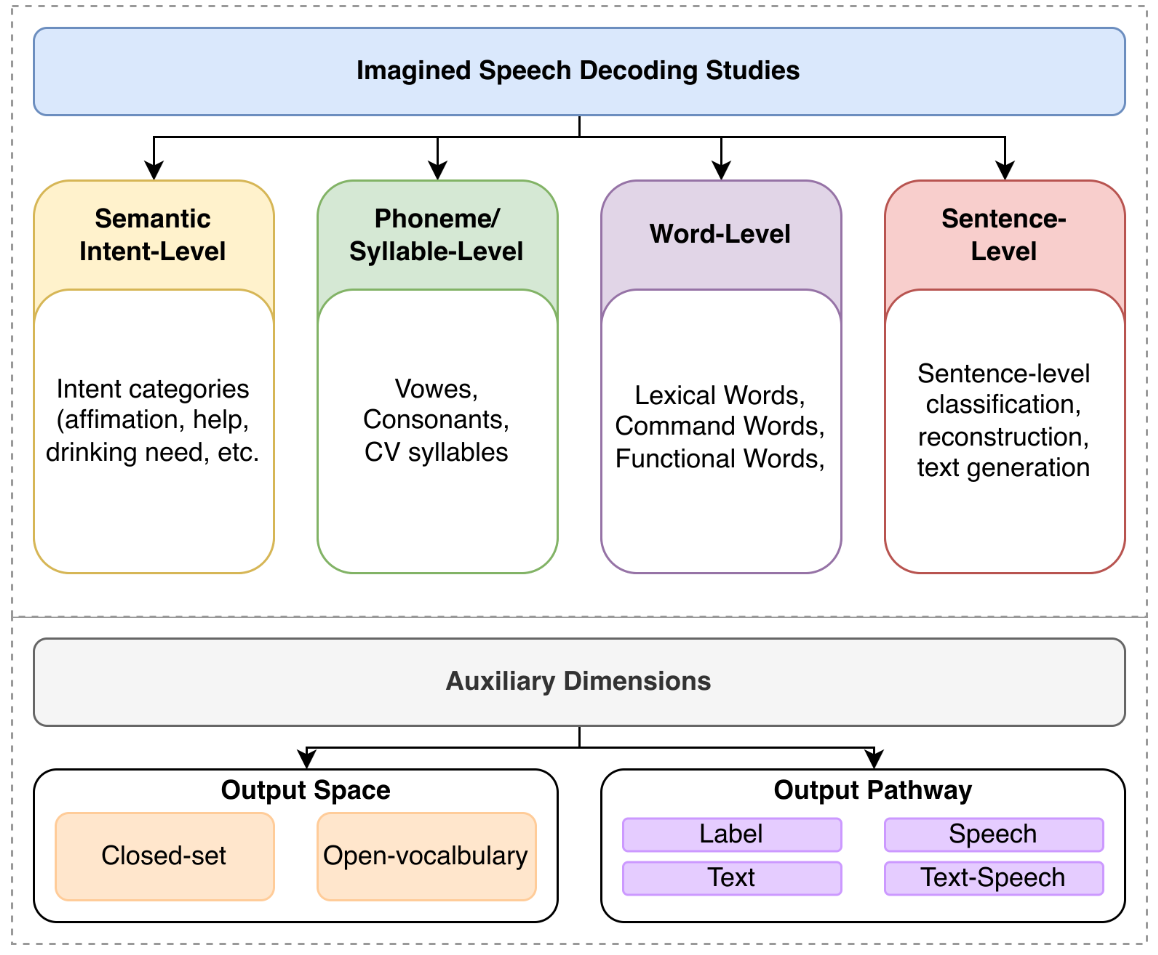

Haodong Zhang, Wai Ting Siok, Nizhuan Wang. Imagined Speech Brain–Computer Interface: A Task-Oriented Review of Neural Decoding [J]. Sensors, 2026.

Abstract:

Imagined speech decoding has attracted growing interest in brain–computer interface (BCI) research, as it may enable language-related information to be recovered from non-overt neural activity. Current studies in this area are often treated as a single, unified research problem, despite substantial differences in decoding target, output constraints, and system output forms. This review examines recent imagined speech decoding research from a task-oriented perspective, with a focus on how different neural decoding tasks are defined, constrained by their output spaces, and expressed through different output pathways. The included studies are organized into four main task levels: semantic/intent, phoneme/syllable, word, and sentence/language decoding. They are further compared along two auxiliary dimensions: output-space property and output pathway, with particular attention to closed-set and open-vocabulary settings. The review shows that current studies span markedly different linguistic granularities and communication objectives, from low-bandwidth intent recognition to text or speech reconstruction. Finally, it concludes that imagined speech should not be treated as a single homogeneous decoding problem, and that a task-oriented framework provides a clearer basis for comparing heterogeneous studies and guiding future communication-oriented BCI research.

We are delighted to share that Dr. Nizhuan Wang has been invited to deliver an online presentation at Translational-Neuro.org on “From Neuroimaging to Brain–Computer Interfaces: The Advanced AI-Powered Brain Decoding Revolution”.

This presentation will focus on the rapidly advancing field of AI-powered brain decoding. Dr. Wang will discuss AI-powered neuroimaging computation, AI-powered brain–computer interfaces, and future perspectives in AI, neuroimaging, BCI, and brain disorders. The talk will highlight how advanced artificial intelligence approaches are transforming the interpretation of brain activity and opening new possibilities for neuroimaging analysis, brain–computer interfaces, and translational neuroscience research.

Read the full event details here: Translational-Neuro.org

https://www.translational-neuro.org/event-details/from-neuroimaging-to-brain-computer-interfaces-the-advanced-ai-powered-brain-decoding-revolution

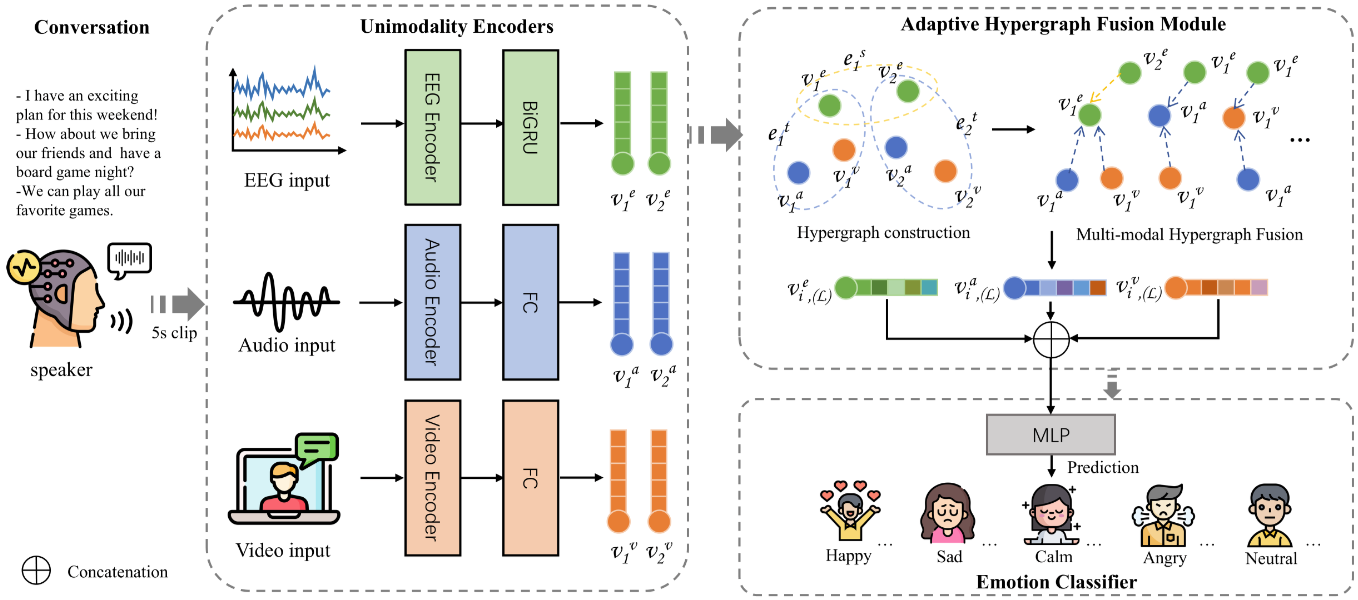

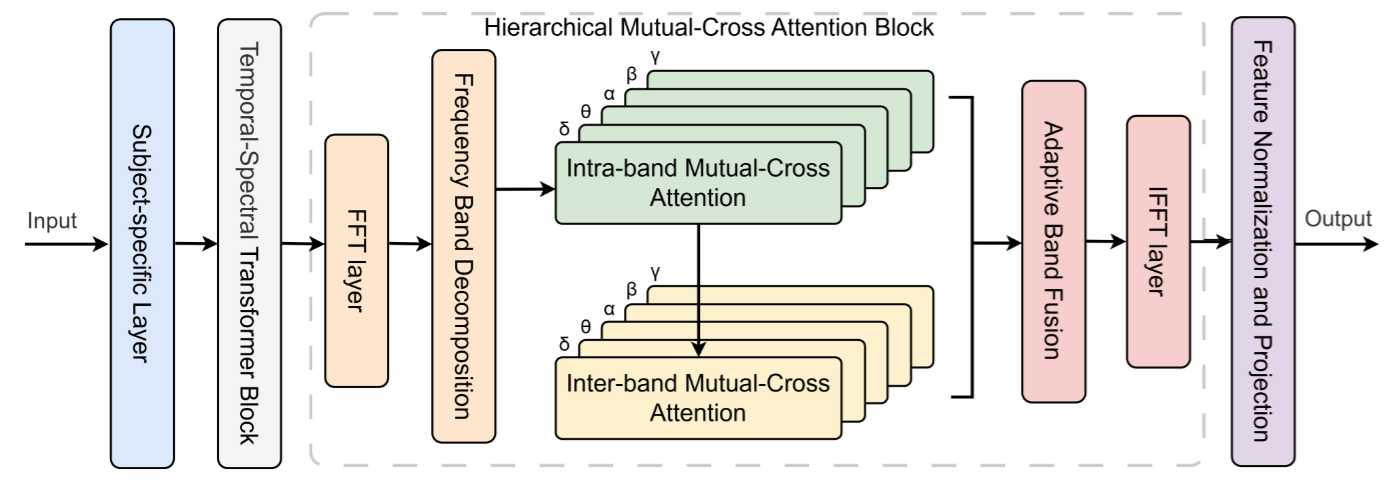

Zijian Kang*, Yueyang Li*, Shengyu Gong, Weiming Zeng, Hongjie Yan, Lingbin Bian, Zhiguo Zhang, Wai Ting Siok, Nizhuan Wang. Hypergraph multi-modal learning for EEG-based emotion recognition in conversation [J]. Neural Networks, 2026.

Abstract:

Emotion Recognition in Conversation (ERC) is valuable for diagnosing health conditions such as autism and depression, and for understanding the emotions of individuals who struggle to express their feelings. Current ERC methods primarily rely on semantic, audio and visual data but face significant challenges in integrating physiological signals such as Electroencephalography (EEG). This research proposes Hypergraph Multi-Modal Learning (Hyper-MML), a novel framework for identifying emotions in conversation. Hyper-MML effectively integrates EEG with audio and video information to capture complex emotional dynamics. Firstly, we introduce an Adaptive Brain Encoder with Mutual-cross Attention (ABEMA) module for processing EEG signals. This module captures emotion-relevant features across different frequency bands and adapts to subject-specific variations through hierarchical mutual-cross attention mechanisms. Secondly, we propose an Adaptive Hypergraph Fusion Module (AHFM) to actively model the higher-order relationships among multi-modal signals in ERC. Experimental results on the EAV and AFFEC datasets demonstrate that our Hyper-MML model significantly outperforms current state-of-the-art methods. The proposed Hyper-MML can serve as an effective communication tool for healthcare professionals, enabling better engagement with patients who have difficulty expressing their emotions. The official implementation code is available at https://github.com/NZWANG/Hyper-MML.

We are pleased to share that the Department of Language Science and Technology at The Hong Kong Polytechnic University will host Prof. Yi Guo from Southern University of Science and Technology for an LST Research Seminar on 29 April 2026 (Wednesday).

In this talk, titled “EEG-Based Brain Function Assessment and Precision Neuromodulation,” Prof. Guo will present how high-density EEG can be transformed from a routine recording tool into an actionable platform for functional brain assessment, cognitive risk stratification, and personalized neuromodulation. Using the development of the Electroencephalography-based Cognitive Decline Screening System (E-CDS) as an example, he will demonstrate how spectral, connectivity, and network-level EEG markers can support the early detection of cognitive decline, differential assessment across neuropsychiatric disorders, and monitoring of treatment response.

The talk will also discuss the closed-loop integration of EEG and transcranial magnetic stimulation (TMS) for precision intervention in cognitive and affective disorders, highlighting the potential of advanced neurotechnology in both research and clinical applications.

Prof. Yi Guo, MD, PhD, is Chief Brain Health Expert at United Family Healthcare, Director of the Functional Neuroscience Center at Tianjin Huanhu Hospital, and Professor and Doctoral Supervisor at Southern University of Science and Technology. He is a senior neurologist specializing in cerebrovascular disease, cognitive impairment, affective disorders, neuromodulation, and brain-computer interface translation. He pioneered EEG-based functional brain network research in China and led the development of both the E-CDS and an integrated high-density EEG-TMS platform for precision neuromodulation.

Date: 29 April 2026 (Wednesday)

Time: 15:30–16:30 (HKT)

Venue: HHB106, Hung Hom Bay Campus, PolyU

Zoom Meeting ID: 925 2441 8249

Password: 557055

Registration / Online Access: Please scan the QR code on the poster to join via Zoom.

We are delighted to share that the SIOK LAB of the Department of Language Science and Technology at The Hong Kong Polytechnic University was invited to participate in the PolyU Alumni Day showcase. At the event, our laboratory presented NeuroVoice, a non-invasive intelligent speech brain–computer interface system developed entirely in-house, with full proprietary intellectual property.

NeuroVoice is designed to help restore natural communication for individuals with speech impairments. It integrates a non-invasive wearable device with an intelligent analysis platform to monitor language-related brain activity, interpret neural signals, and translate them into speech and text in real time through an AI-powered model.

During the showcase, many volunteers enthusiastically tried the NeuroVoice system, which received highly positive feedback from scholars, teachers, students, and alumni alike. We are sincerely grateful for the long-standing support of the University, the Faculty, and the Department, and for the tireless dedication of our team members, whose hard work made this presentation possible. We would also like to extend special thanks to Dean Hu of the Faculty of Humanities for the continued attention and support given to our research.

The showcase also brought us many valuable suggestions from users, all of which our team greatly appreciates. We will continue refining the system and strive to launch an upgraded version of NeuroVoice in the near future, with the goal of better serving individuals with speech impairments.

We are pleased to share that our lab will host Prof. Yang Yang (Associate Professor, Institute of Psychology, Chinese Academy of Sciences) for a UBSN Research Seminar under the UBSN Capacity Building Scheme: Inbound Scheme at The Hong Kong Polytechnic University on 17 April 2026 (Friday).

In this talk, “How the Brain Learns to Read and Write – And Why Some Struggle,” Prof. Yang will present recent findings on the developmental and evolutionary mechanisms of Chinese handwriting and reading based on functional and structural MRI studies. He will discuss how handwriting development is accompanied by focal functional specialization, increasing functional lateralization, and dynamic reconfiguration of cognitive, sensorimotor, and visual networks. He will also introduce cross-species evidence from humans and macaques that highlights the anatomical similarity and functional evolution of Exner’s area, a shared brain locus involved in both reading and writing.

In the second part of the talk, Prof. Yang will discuss the neural basis of writing deficits and their relationship to reading impairments in developmental dyslexia. He will present findings showing that children with dyslexia exhibit abnormalities in both regional activation and functional connectivity during handwriting, and that reduced activation in the left supplementary motor area and the right precuneus is linked to impairments in both handwriting and reading. He will also introduce a digital handwriting-based training program that significantly improves writing and reading skills, with transfer effects on attention abilities.

• Date: 17 April 2026 (Friday)

• Time: 11:00 am–12:00 noon

• Venue: Room PQ303, PolyU

• Registration: Please register via the QR code on the poster.

We warmly welcome students and colleagues to join us!

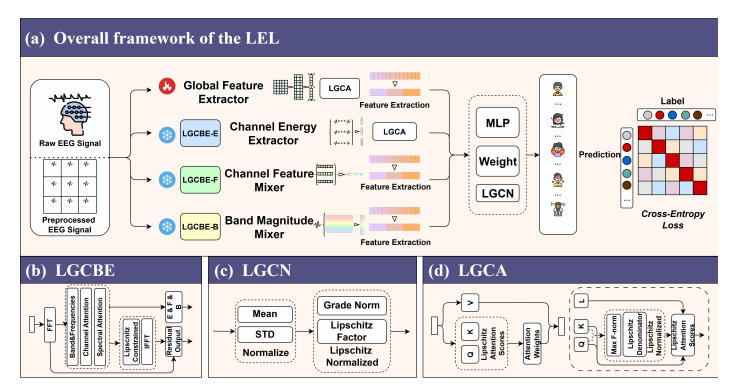

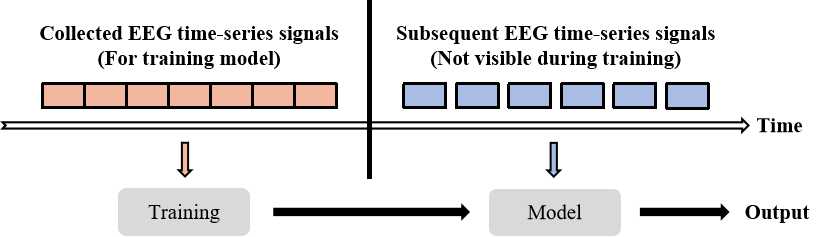

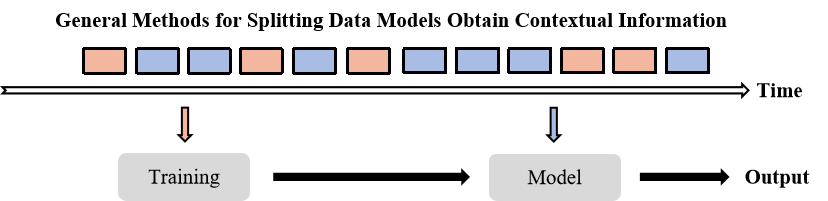

S Gong, Y Li, Z Kang, B Chai, W Zeng, H Yan, Z Zhang, WT Siok, N Wang. LEREL: Lipschitz Continuity-Constrained Emotion Recognition Ensemble Learning For Electroencephalography [J]. IEEE Sensors Journal, 2026.

Abstract:

Accurate and efficient recognition of emotional states is critical for human social functioning, and impairments in this ability are associated with significant psychosocial difficulties. While electroencephalography (EEG) offers a powerful tool for objective emotion detection, existing EEG-based Emotion Recognition (EER) methods suffer from three key limitations: (1) insufficient model stability, (2) limited accuracy in processing high-dimensional nonlinear EEG signals, and (3) poor robustness against intra-subject variability and signal noise. To address these challenges, we introduce Lipschitz continuity-constrained Ensemble Learning (LEL), a novel framework that enhances EEG-based emotion recognition by enforcing Lipschitz continuity constraints on Transformer-based attention mechanisms, spectral extraction, and normalization modules. This constraint ensures model stability, reduces sensitivity to signal variability and noise, and improves generalization capability. Additionally, LEL employs a learnable ensemble fusion strategy that optimally combines decisions from multiple heterogeneous classifiers to mitigate single-model bias and variance. Extensive experiments on three public benchmark datasets (EAV, FACED, and SEED) demonstrate superior performance, achieving average recognition accuracies of 74.25%, 81.19%, and 86.79%, respectively. The official implementation code is available at https://github.com/NZWANG/LEL.

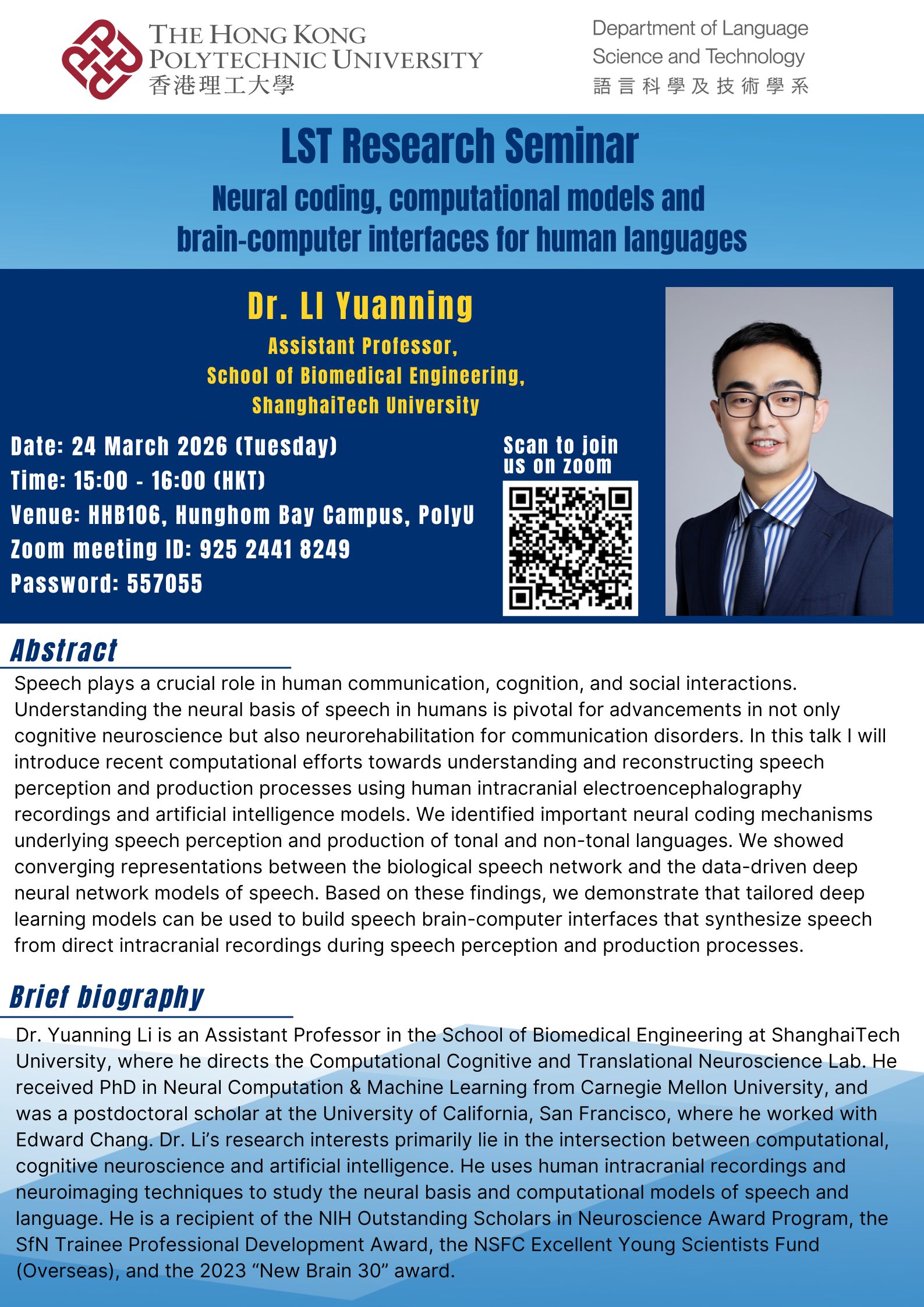

We are pleased to share that our lab will host Dr. Yuanning Li (Assistant Professor, School of Biomedical Engineering, ShanghaiTech University) for an LST Research Seminar at The Hong Kong Polytechnic University on 24 March 2026 (Tuesday).

In this talk, “Neural coding, computational models and brain-computer interfaces for human languages,” Dr. Li will introduce recent computational efforts to understand and reconstruct speech perception and production using human intracranial electrophysiology recordings and AI models. He will also discuss converging representations between biological speech networks and deep neural network models, and how tailored deep learning models can enable speech brain–computer interfaces that synthesize speech directly from intracranial signals.

- Date: 24 March 2026 (Tue)

- Time: 15:00–16:00 (HKT)

- Venue: HHB106, Hung Hom Bay Campus, PolyU

- Zoom: Meeting ID 925 2441 8249 | Password 557055 (or scan the QR code on the poster)

We warmly welcome students and colleagues to join us onsite or online!

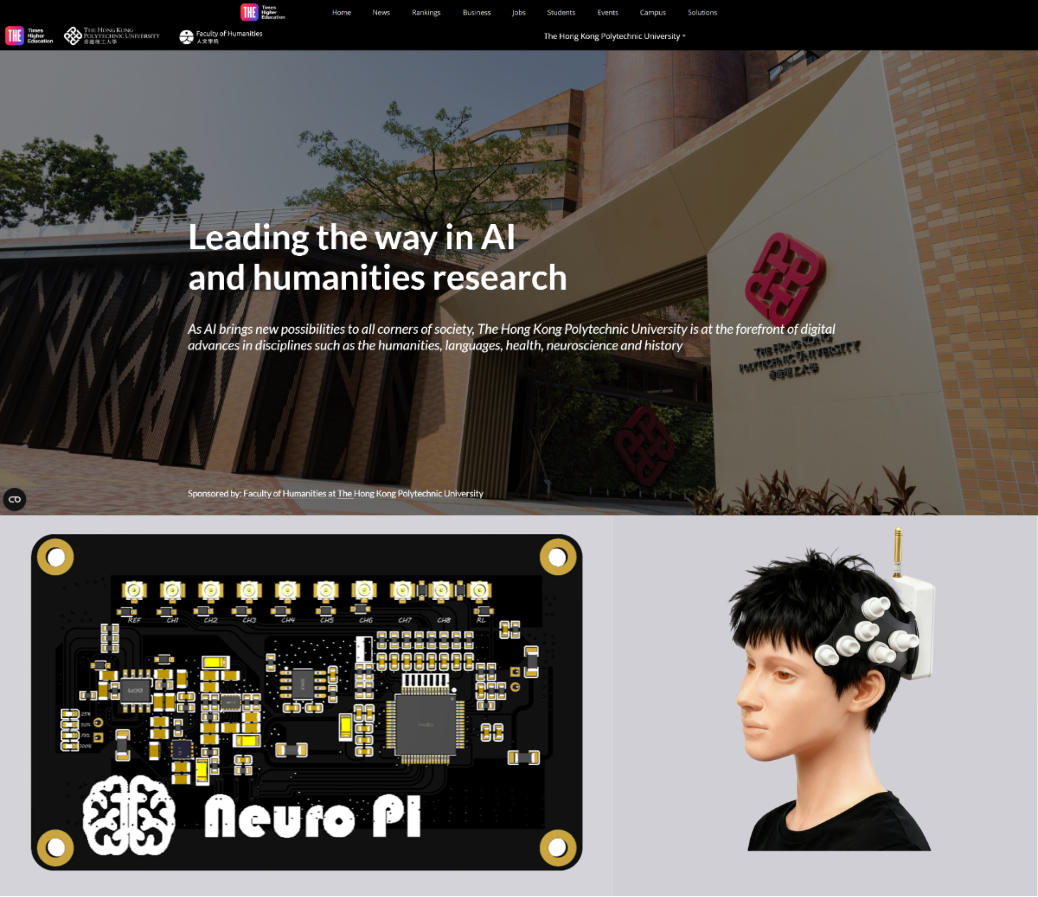

We are delighted to share that NeuroVoice has been featured by Times Higher Education (THE) in a story titled “Leading the way in AI and humanities research.”

NeuroVoice is a brain–computer interface application designed to enhance communication for individuals with speech impairments. It integrates a wearable device that monitors language-related brain regions with an analysis platform for interpretation and real-time visualisation, and can decode neural activity to “translate” it into speech and text via an AI-based model.

Read the full feature here: The Hong Kong Polytechnic University | Times Higher Education (THE)

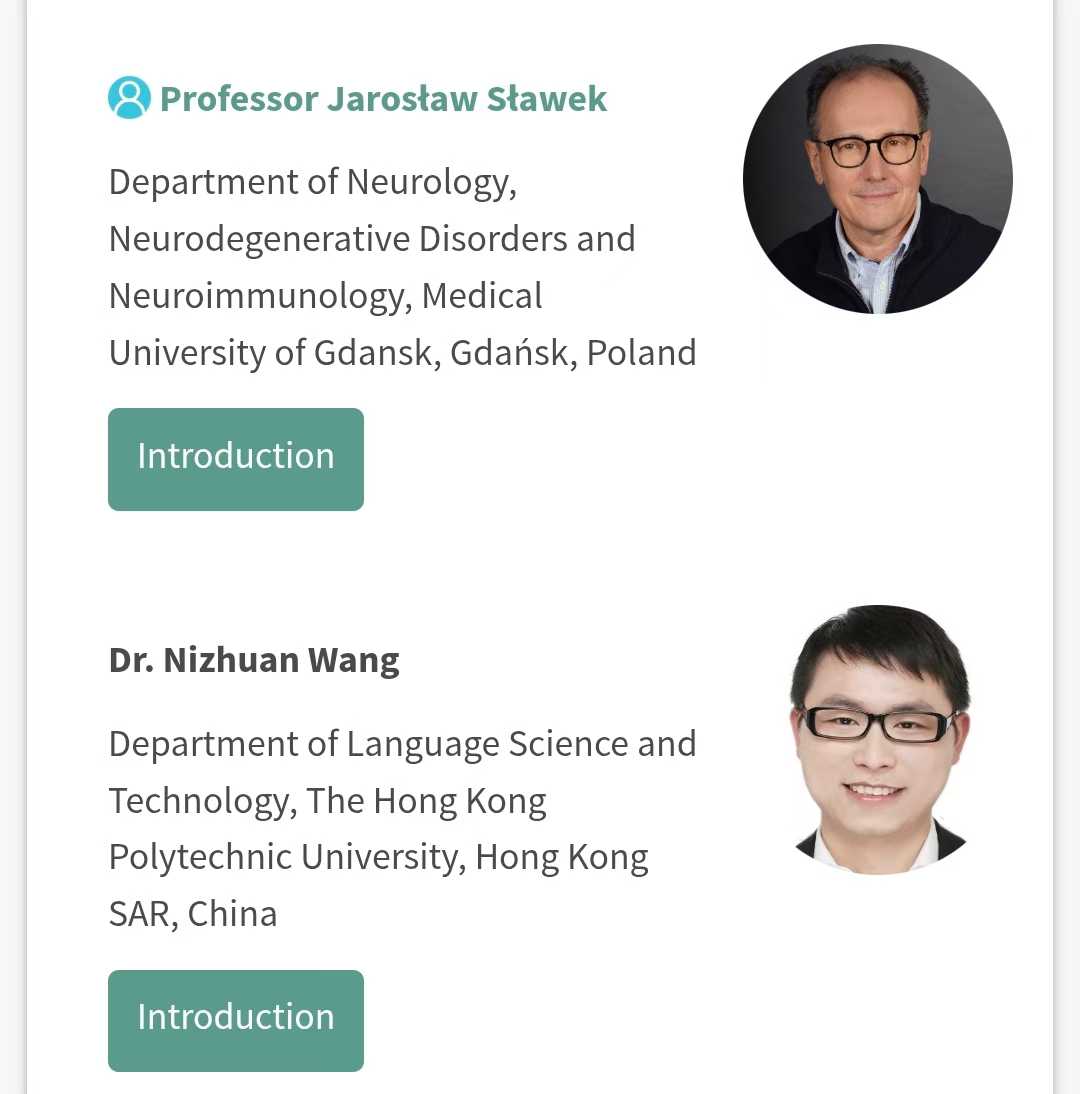

We are delighted to announce that Dr. WANG Nizhuan has been invited by Prof. Woon-Man Kung to deliver a talk at The 5th International Electronic Conference on Brain Sciences & 1st International Electronic Conference on Neurosciences (IECBS-IECNS 2026), which will be held online on March 9–11, 2026.

During the conference, Dr. WANG Nizhuan will present to experts, scholars, and colleagues worldwide a comprehensive analysis of the current landscape, key challenges, and future directions of single-channel EEG-based brain-computer interfaces.

The talk is titled “Single-Channel EEG-Based Brain-Computer Interfaces: Current Landscape and Future Directions.” He looks forward to meeting everyone at IECBS-IECNS 2026.

For more information about the conference and the speaker session, please visit: https://sciforum.net/event/IECBS-IECNS2026?section=#event_speakers

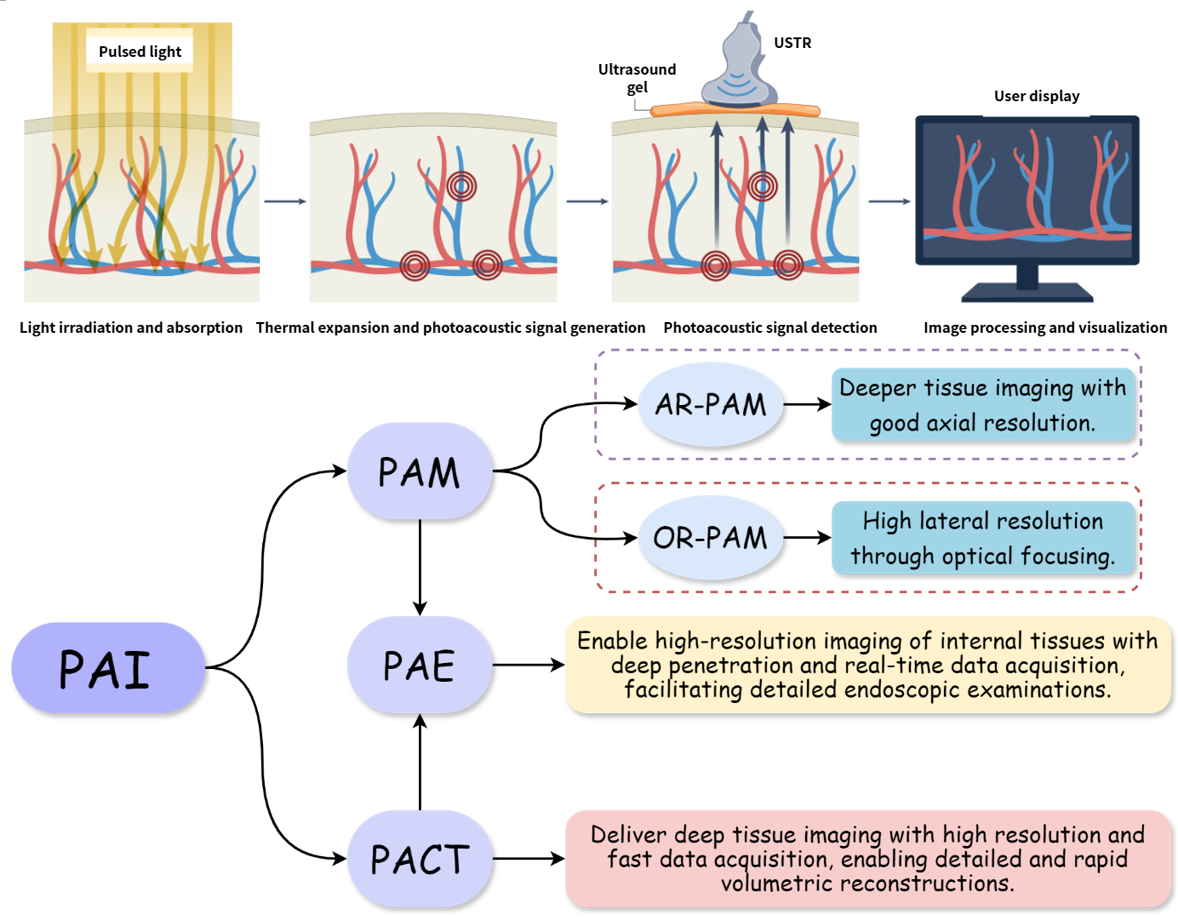

Lei Wang, Weiming Zeng, Kai Long, Hongyu Chen, Rongfeng Lan, Li Liu, Wai Ting Siok, Nizhuan Wang. Advances in Photoacoustic Imaging Reconstruction and Quantitative Analysis for Biomedical Applications [J]. Visual Computing for Industry, Biomedicine, and Art, 2025.

Abstract:

Photoacoustic imaging (PAI), a modality that combines the high contrast of optical imaging with the deep penetration of ultrasound, is rapidly transitioning from preclinical research to clinical practice. However, its widespread clinical adoption faces challenges such as the inherent trade-off between penetration depth and spatial resolution, along with the demand for faster imaging speeds. This review comprehensively examines the fundamental principles of PAI, focusing on three primary implementations: photoacoustic computed tomography (PACT), photoacoustic microscopy (PAM), and photoacoustic endoscopy (PAE). It critically analyzes their respective advantages and limitations to provide insights into practical applications. The discussion then extends to recent advancements in image reconstruction and artifact suppression, where both conventional and deep learning (DL)-based approaches have been highlighted for their role in enhancing image quality and streamlining workflows. Furthermore, this work explores progress in quantitative PAI, particularly its ability to precisely measure hemoglobin concentration, oxygen saturation, and other physiological biomarkers. Finally, this review outlines emerging trends and future directions, underscoring the transformative potential of DL in shaping the clinical evolution of PAI.

We are delighted to announce that Dr. Wang Nizhuan has been elected Senior Associate Editor of Cognitive Neurodynamics (CODY), a prestigious hybrid journal published by Springer Nature.

Founded in 2007, Cognitive Neurodynamics has established itself as a key academic platform in its field. Its latest impact factor is 3.9, and it is ranked Q2 in the Journal Citation Reports (JCR). The journal focuses on cutting-edge research areas including cognitive neuroscience, brain-computer interfaces, and computational neuroscience, providing a vital forum for scholars worldwide to exchange innovative ideas and findings. For more information about the journal and its editorial board, please visit: https://link.springer.com/journal/11571/editorial-board.

Dr. WANG Nizhuan has been invited to deliver a plenary address at the 2025 International Neural Regeneration Symposium (INRS2025), to be held on October 24–26, 2025. His presentation, titled “From Neural Mechanisms to Clinical Diagnosis: Decoding Brain Disorders via AI-powered Neuroimaging,” will showcase his pioneering research at the intersection of AI, neuroimaging, and brain disorders.

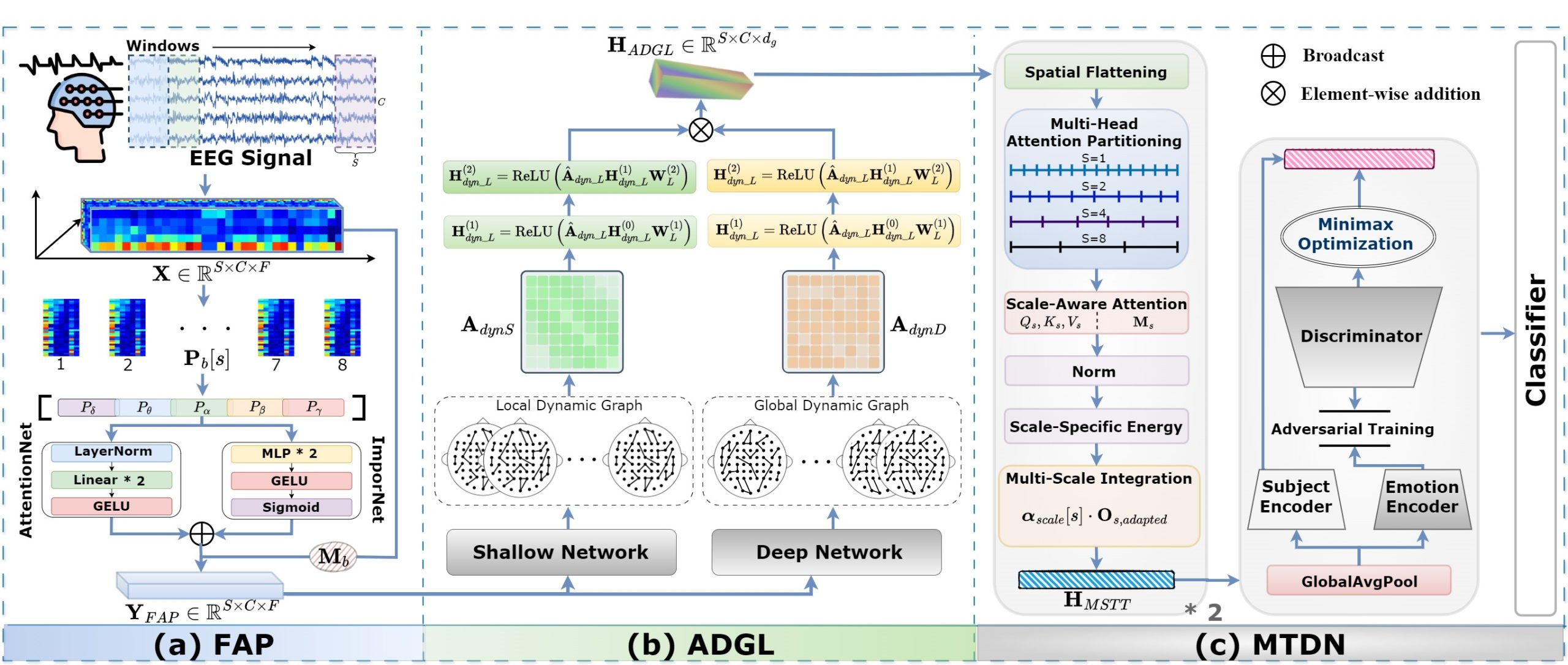

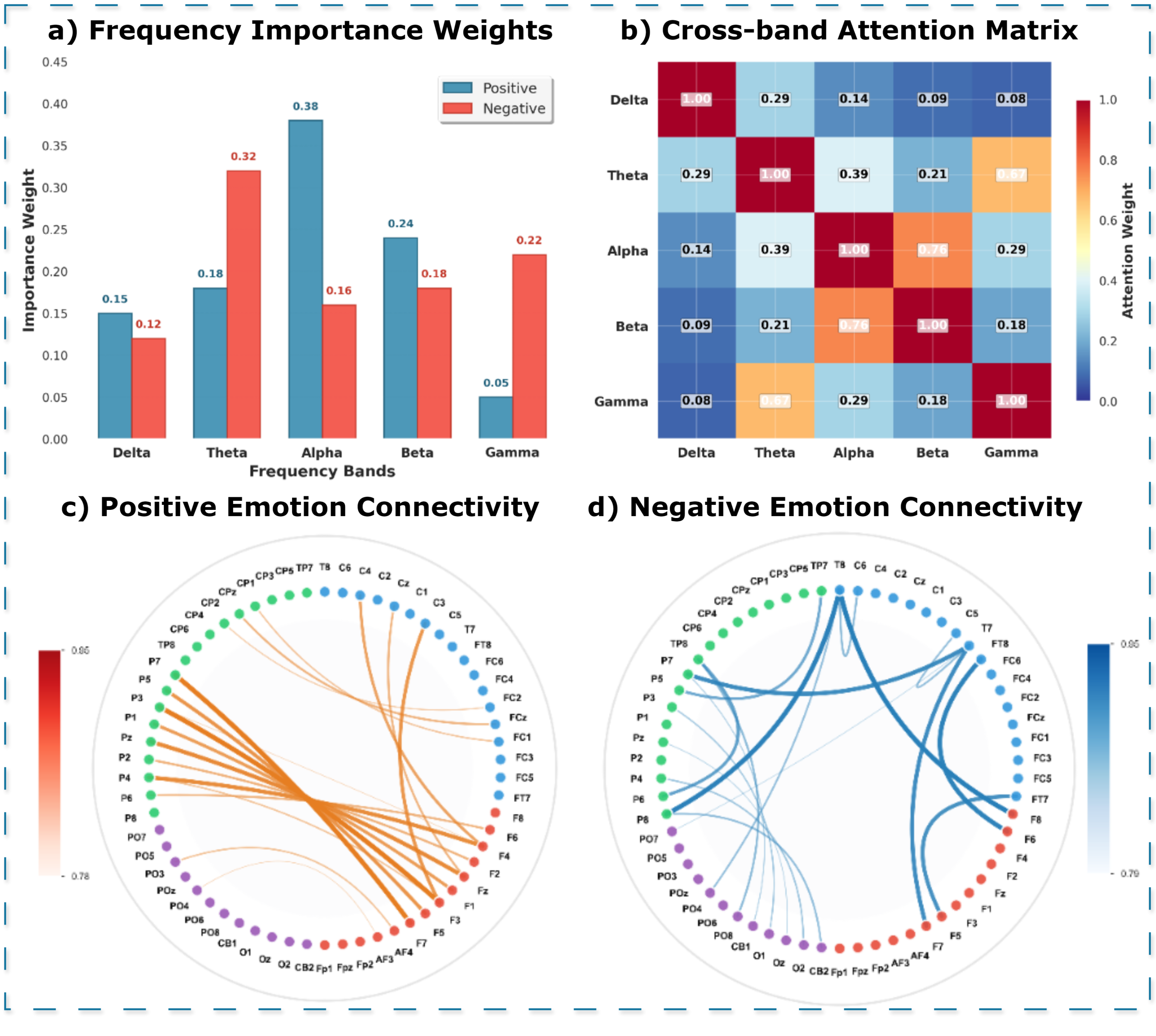

Yueyang Li, Shengyu Gong, Weiming Zeng, Nizhuan Wang, Wai Ting Siok. FreqDGT: Frequency-Adaptive Dynamic Graph Networks with Transformer for Cross-subject EEG Emotion Recognition. The 2025 International Conference on Machine Intelligence and Nature-InspireD Computing (MIND).

Abstract:

Electroencephalography (EEG) serves as a reliable and objective signal for emotion recognition in affective brain-computer interfaces, offering unique advantages through its high temporal resolution and ability to capture authentic emotional states that cannot be consciously controlled. However, cross-subject generalization remains a fundamental challenge due to individual variability, cognitive traits, and emotional responses. We propose FreqDGT, a frequency-adaptive dynamic graph transformer that systematically addresses these limitations through an integrated framework. FreqDGT introduces frequency-adaptive processing (FAP) to dynamically weight emotion-relevant frequency bands based on neuroscientific evidence, employs adaptive dynamic graph learning (ADGL) to learn input-specific brain connectivity patterns, and implements a multi-scale temporal disentanglement network (MTDN) that combines hierarchical temporal transformers with adversarial feature disentanglement to both capture temporal dynamics and ensure cross-subject robustness. Comprehensive experiments demonstrate that FreqDGT significantly improves cross-subject emotion recognition accuracy, confirming the effectiveness of integrating frequency-adaptive, spatial-dynamic, and temporal-hierarchical modeling while ensuring robustness to individual differences. The code is available at https://github.com/NZWANG/FreqDGT.

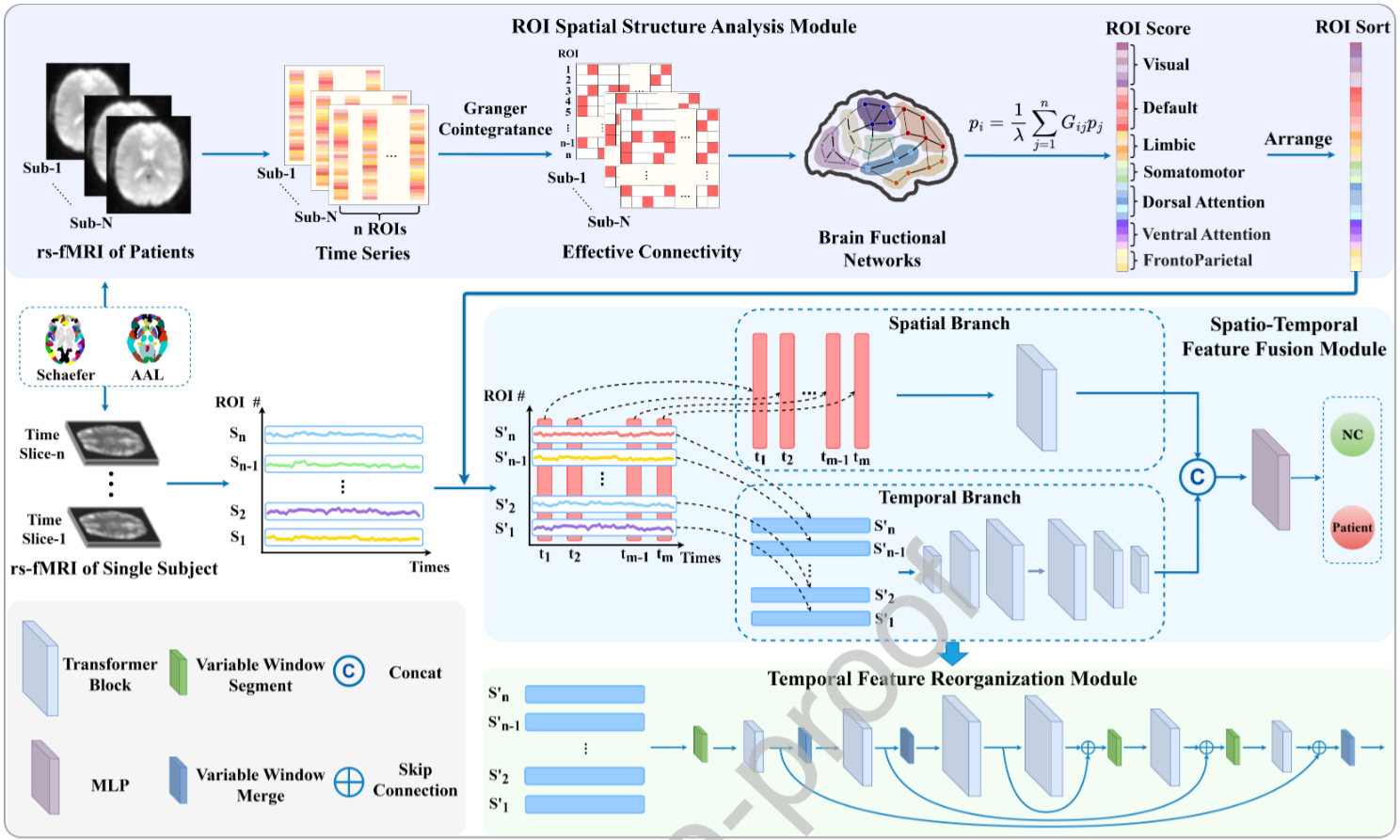

Wenhao Dong*, Yueyang Li*, Weiming Zeng, Lei Chen, Hongjie Yan, Wai Ting Siok, Nizhuan Wang. STARFormer: A Novel Spatio-Temporal Aggregation Reorganization Transformer of FMRI for Brain Disorder Diagnosis. Neural Networks (2025): 107927.

Abstract:

Many existing methods that use functional magnetic resonance imaging (fMRI) to classify brain disorders, such as autism spectrum disorder (ASD) and attention deficit hyperactivity disorder (ADHD), often overlook the integration of spatial and temporal dependencies of the blood oxygen level-dependent (BOLD) signals, ….

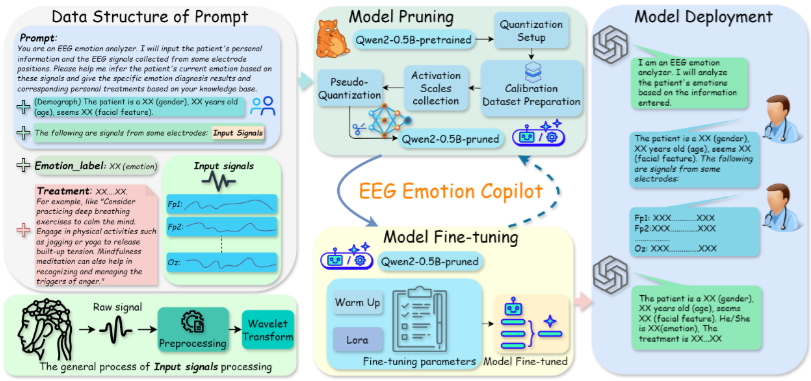

Hongyu Chen, Weiming Zeng, Chengcheng Chen, Luhui Cai, Fei Wang, Yuhu Shi, Lei Wang, Wei Zhang, Yueyang Li, Hongjie Yan, Wai Ting Siok, Nizhuan Wang. EEG emotion copilot: Optimizing lightweight LLMs for emotional EEG interpretation with assisted medical record generation. Neural Networks (2025): 107848.

Abstract:

In the fields of affective computing (AC) and brain-computer interface (BCI), the analysis of physiological and behavioral signals to discern individual emotional states has emerged as a critical research frontier. While deep learning-based approaches have made notable strides in EEG emotion recognition, particularly in feature extraction and pattern recognition, significant challenges persist in achieving end-to-end emotion computation, including rapid processing, individual adaptation….